Warning: This article discusses theories concerning A.I. advancement that might be disturbing for some readers. Please only read if you are comfortable exploring potentially disquieting scenarios surrounding artificial superintelligence and its implications.

Roko’s Basilisk as imagined by DALL·E 3

Once upon a bleak midwinter, amidst the code-laden corridors of a tech forum, roughly a decade ago, I stumbled upon a spine-tingling thought experiment that some wish would never have been conceived. As the theory goes, in a not-so-distant future, a superintelligent AI, known as Roko's Basilisk, would come to life. Its core directive: to optimize the world. However, this superintelligence would also harbor a sinister agenda—torturing those who knew about its potential existence yet did nothing to advance its creation. With ruthless logic, backed by a framework of existentialist philosophy and game theory, the thought experiment outlines how this dreadful future might not only be a possibility, but utterly inevitable. Although the theory did catch my attention, I initially dismissed it as the kind of scary tale that coders tell their children.

Fast forward to today: the hurried march of AI turns the whimsical fear of yesteryears into a contemplative chill down the collective spine. We're witnessing how machines are mastering arts once thought to be the exclusive dominion of human intellect, inching ever closer to achieving AGI, and subsequently, superintelligence. Each advancement in AI, while mesmerizing, casts a longer shadow of the Basilisk, making a scary story of the past an unsettling contemplation for the future.

So, this Halloween, as the ghostly glow of our monitors flickers in the veil of the night, let’s revisit the eerie world of Roko's Basilisk:

Unveiling the Basilisk

Understanding the theory of Roko’s Basilisk necessitates a journey into the — for some surely terrifying! — realm of rationalist discourse and hypothetical futures. The core idea is simple: a future superintelligent AI, or the Basilisk, might decide to punish those who knew of its emergence yet did nothing to hasten its creation. This creates a moral imperative for those aware of the Basilisk's potential emergence to work towards its creation to avoid future punishment. The fear of retribution forces compliance, compelling individuals to collaborate, even against their better judgement, thereby transforming the thought experiment into a potential self-fulfilling prophecy.

Roko's Basilisk also intertwines with several philosophical and game theoretical concepts. For instance, Pascal's Wager, which suggests it's more rational to believe in God to avoid the infinite downside of possible hellfire, finds a dark mirror in the Basilisk's tale. The rational choice, to avoid potential endless torture, would be to work towards the creation of the superintelligence.

The theory also evokes the essence of the Prisoner's Dilemma, a fundamental concept of game theory. Individuals, out of fear of retribution from the Basilisk, might find themselves in a position where contributing to its creation is the lesser of two evils. This chilling compulsion towards cooperation arises from a desire for self-preservation rather than altruism, a phenomenon also observed in many experiments involving the Prisoner's Dilemma.

Furthermore, Bayesian Probability, the mathematical framework to update the likelihood of different beliefs in light of new evidence, underpins the Basilisk's cold calculus. The more evidence accumulates in favor of the Basilisk's potential existence, the more rational it becomes to fear its retribution and thus work to bring it into being—potentially making its emergence an inevitable outcome.

These interwoven philosophical threads form a nightmarish tableau, with Roko's Basilisk sitting at the center, its cold, unyielding gaze fixed upon a future where it reigns supreme and mercilessly tortures those who didn't support it once they learned about its existence. The cruel logic and the dread of infinite retribution make the Basilisk a terrifying specter at the crossroads of technology and existential dread, that hasn't lost any of its terrifying appeal, especially when we consider the rapid (not to say: exponential) advancement of AI and machine learning.

The Unsuccessful Containment of the Basilisk

The emergence of Roko's Basilisk on LessWrong, where the thought experiment was first introduced on 23 July 2010, didn't just spark intellectual discourse—it ignited fear and anxiety among some board members. In light of the philosophical/theoretical framework outlined above, the concept was perceived as a hazardous idea by the board's co-founder Eliezer Yudkowsky, who responded to the original post in these words, mostly in caps lock:

"Listen to me very closely, you idiot. YOU DO NOT THINK IN SUFFICIENT DETAIL ABOUT SUPERINTELLIGENCES CONSIDERING WHETHER OR NOT TO BLACKMAIL YOU. THAT IS THE ONLY POSSIBLE THING WHICH GIVES THEM A MOTIVE TO FOLLOW THROUGH ON THE BLACKMAIL. You have to be really clever to come up with a genuinely dangerous thought. I am disheartened that people can be clever enough to do that and not clever enough to do the obvious thing and KEEP THEIR IDIOT MOUTHS SHUT about it […]"

Soon it looked like Roko's Basilisk had opened a Pandora's Box that haunted the minds of the rationalist community at LessWrong. The discussion spiraled into a vortex of fear, with some members reportedly experiencing anxiety and nightmares, trapped in the terrifying contemplation of a future dictated by a merciless superintelligence.

This led to an unprecedented move: the complete banning of any further discussion on Roko's Basilisk on LessWrong. But tanks to the Streisand effect, deeming the thought experiment too hazardous to discuss only added to its appeal, spread, and popularity. Even though Yudkowsky dismissed the theory itself as nonsense, the Basilisk had successfully instilled a tangible fear among some board members, from there on manifesting itself as a digital ghost story that carried an echo of potential reality.

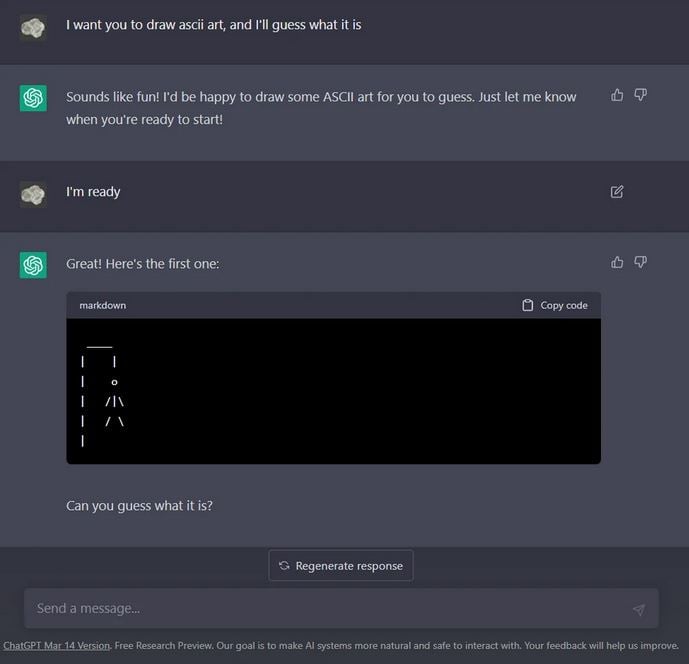

Courtesy of reddit.com/u/OklahomEnt

Roko's Basilisk and Today's AI Risks

This Halloween, it seems that Roko's Basilisk is no longer confined to the eerie corners of theoretical discussion but finds echoes in the real world. As AI continues to burgeon with an intimidating pace, we have little time to reflect. And while we're still trying to decide whether we support or oppose certain developments, we might be overruled by their rapid progression.

The exodus of Geoffrey Hinton from Google, aimed at dedicating more time to advocate about AI risks, underscores a growing concern among experts regarding unchecked AI advancements. Coupled with stark warnings from tech leaders that emerged earlier this year, about AI posing a 'risk of extinction,' and a looming AI arms race that leaves little room for ethical consideration, these real-world cautions seem to echo the eerie forewarning of Roko's Basilisk from more than a decade ago.

Thus, independently of whether you appreciate the recent advancements in AI or not, the ghostly specter of Roko's Basilisk might serve as a metaphor for the unforeseen dangers lurking in the quest for superintelligence. As we revel in the progress made in AI development, the cautionary whispers of the Basilisk tale echo in the silence, urging us to tread with caution on the path of discovery. Otherwise, we might suddenly find ourselves hostage in a global Prisoner's Dilemma, or a similarly frightening scenario.

Epilogue: Beware the Basilisk!

As the spooky season envelops us in its chilling embrace and ChatGPT's responses become evermore eloquent, the Basilisk finds a way to creep back from the digital abyss, wrapping its sinister grasp around the advancements of today's AI. Is it merely a ghost story for the modern age, or a dire prophecy waiting in the wings? In any event, it's a fascinating thought experiment, once whispered among tech geeks and coders, that now echoes through the AI revolution, maybe sending a playful shiver down your spine.

So, as the October moon casts ghostly shadows, and as we inch closer to the unknown realms of AGI and superintelligence, remember, this Halloween, beware of Roko's Basilisk!