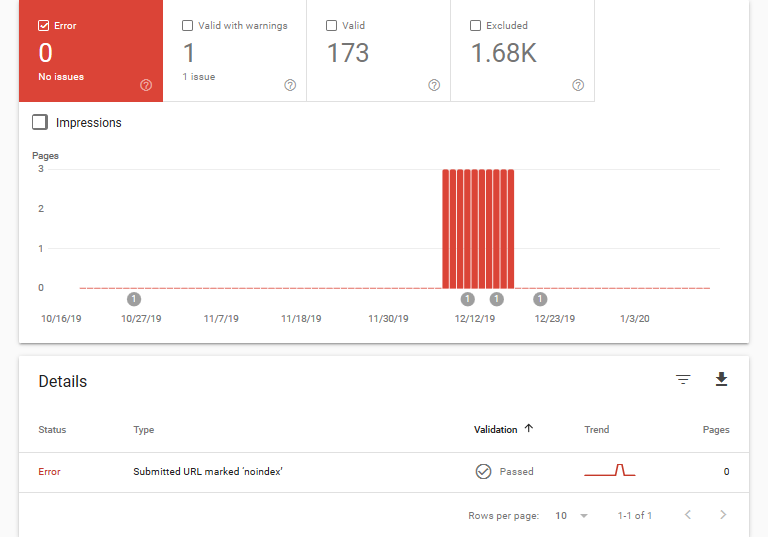

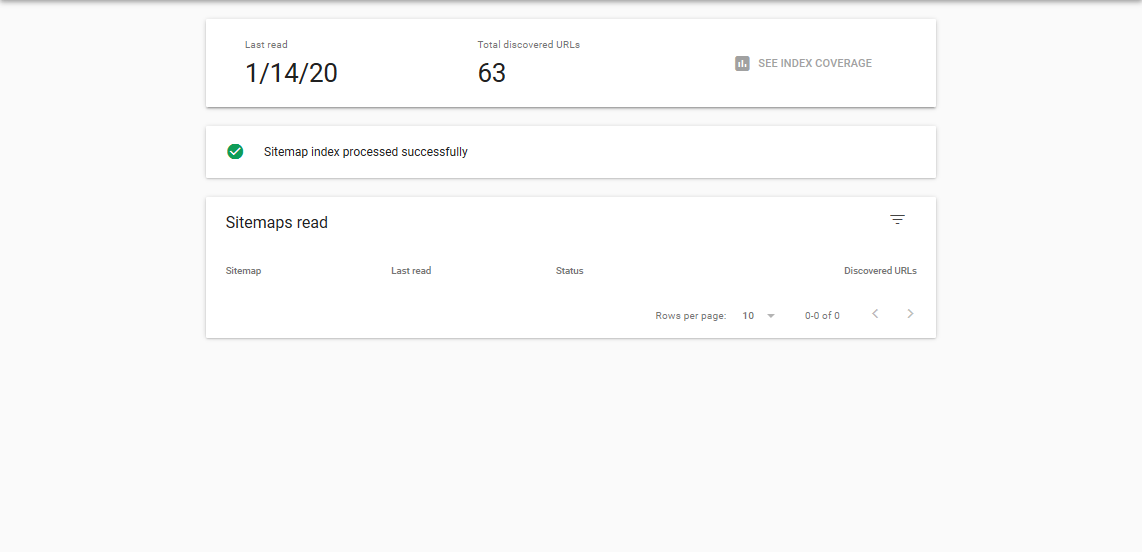

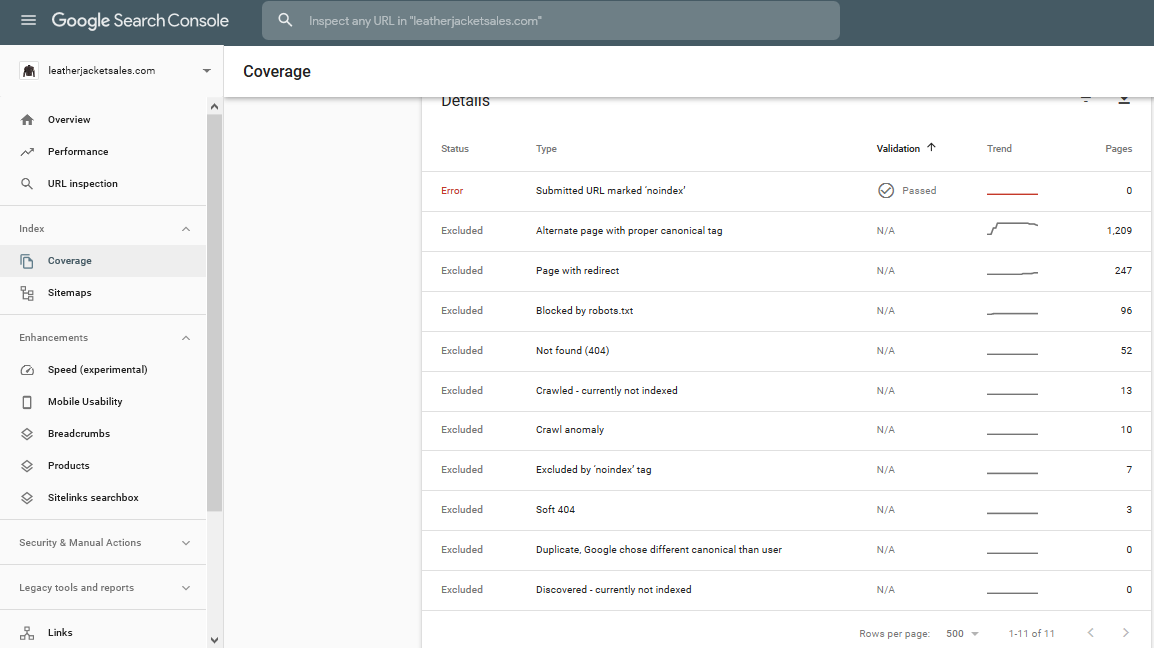

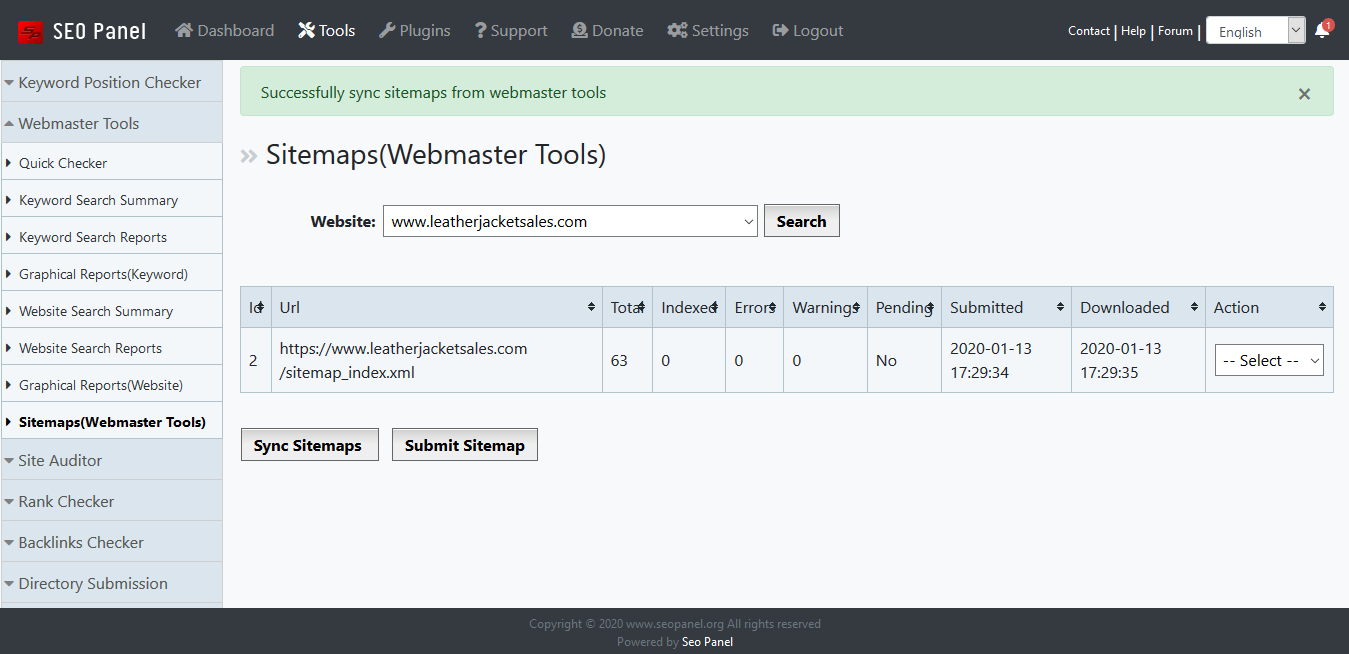

Recently I posted on many platforms but did not get a sufficient answer. Can someone tell me why Google has removed my website from search? I have the right pages and have tested the site with many SEO tools, and everything is fine, yet Google still removed my site. Is it because of Cloudflare? Someone said it's because of a bad neighbourhood. Can anyone suggest a solution? My site is https://www.leatherjacketsales.com/

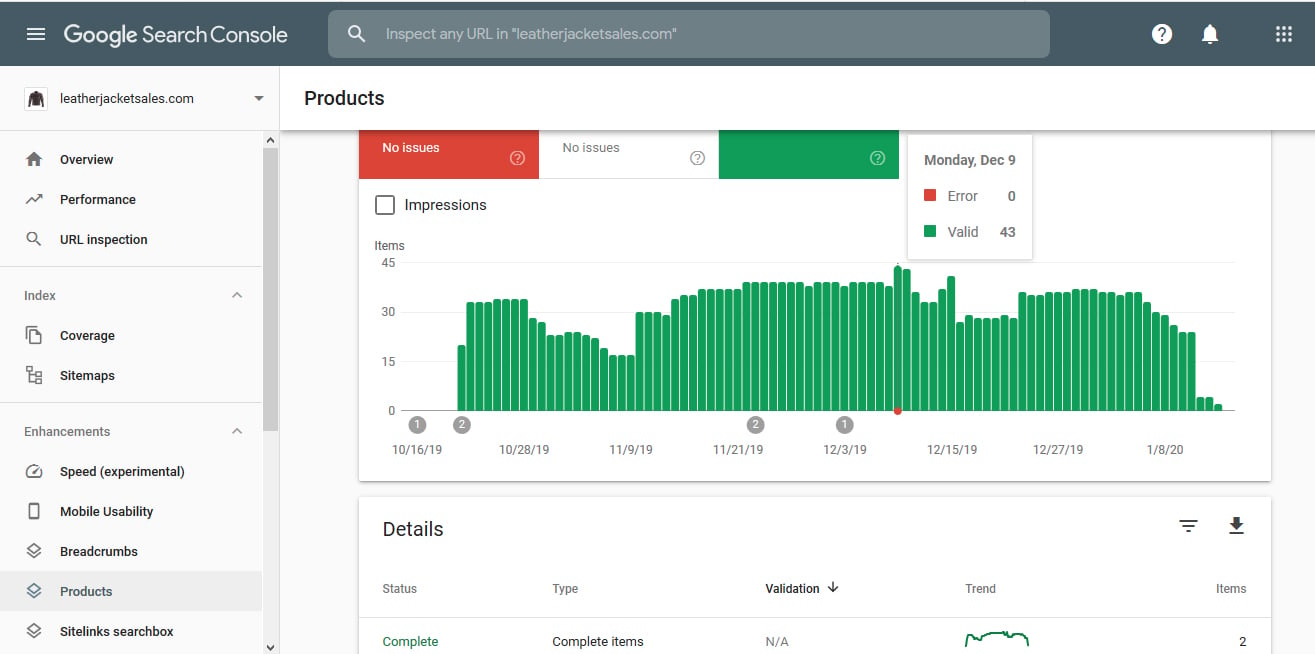

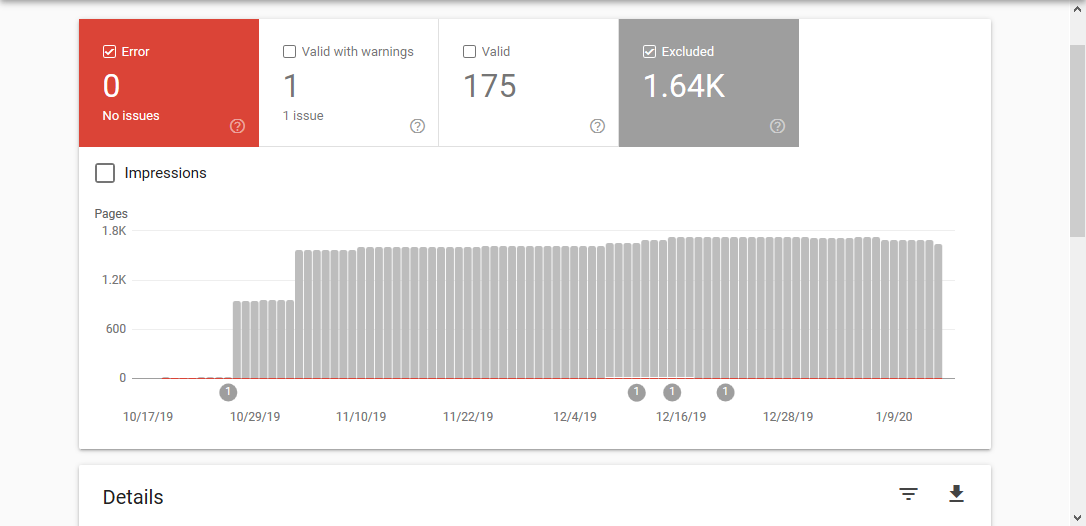

If you check the images, on Dec 9 2019 there were 43 products, and now, on 15 Jan 2020, within about a month almost the whole site has been deindexed for an unknown reason.